Topics

materiality

fitness-for-purpose

iterative design

goal emergence

improvisation

ANT

responsive design

user-centeredness

human-centeredness

design

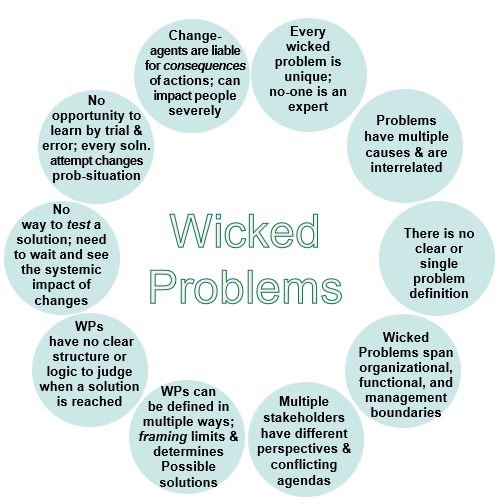

wicked problems

sensitization

iterative/recursive design

human-activity systems

history-of-design

form-follows-function

design patterns

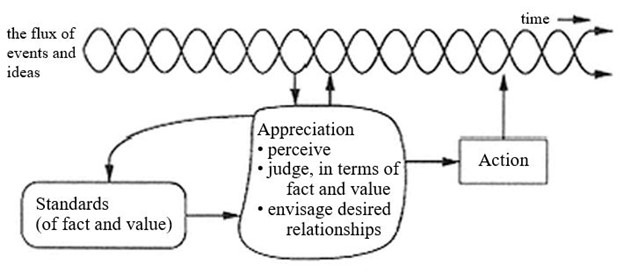

appreciative design

actor-network theory

systemic thinking

UX

organizational change

serendipity

HCI

SSM

human-centered design

socio-technical design

performativity

trajectory

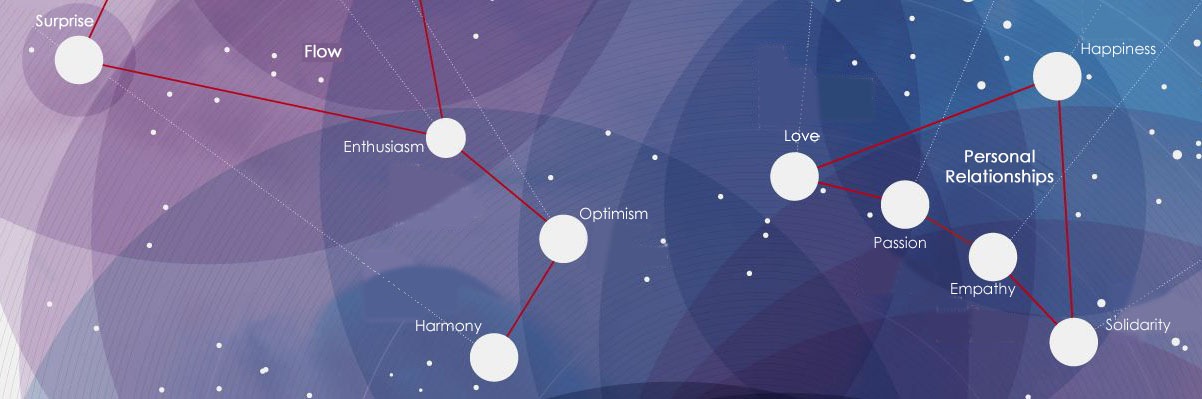

networks of practice

NSF